Lessons from reliability and bathtub curve for busting crimes

Frauds and terrorism

Formidable military cantonments with awe-inspiring security have existed for centuries. Treasure, including notes and coins, has also moved for centuries. Banks have time-honoured systems and processes for internal control and audit.

Nevertheless, we hear of security breaches in military establishments. The choice of time, location, and other details points to a high level of planning. Daring and well-planned train robberies involving sovereign treasure make some Western flicks seem like minor pickpocketing. Bank frauds the size of several Satyam frauds point to governance failures from top to bottom. Investigations followed, and responsibility was fixed. The immediate causes were familiar: system failures, noncompliance with laid-down procedures, and disregard of established norms. But investigators rarely identified the root causes to see why noncompliance took place and what actions could prevent it in the future.

Reliability engineering

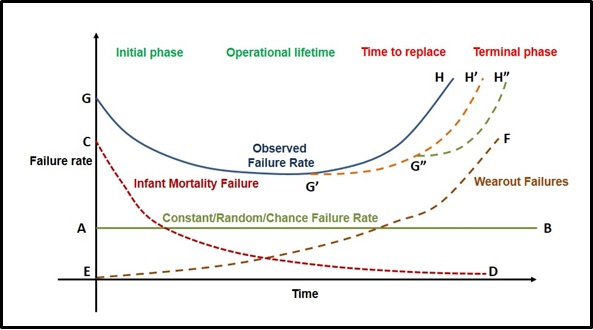

Reliability engineering shows that processes may have a limited life before they become due for an overhaul or a thorough recheck. The bathtub curve in the figure best describes this.

Learning effect

Over time, any process may have an average failure rate, as AB shows. There could be more failures in the initial stages, when machines are new and have teething problems. Those using them are also inexperienced and still learning. The failure rate will later decrease due to the learning effect. The curve CD in the graph shows this.

The now successful UPI payments also saw a spate of frauds in their initial few weeks, when some people exploited loopholes in the product design.

Wearout failures

At the same time, failures could increase during the same period for altogether different reasons. For example, wear and tear of machinery and poor maintenance may increase failures. The curve EF explains this.

In finance, there are two reasons why EF happens. First, indifference on the part of those in charge of controls causes the controls to fail. When no fraud has occurred in a long time, the gatekeepers might relax one or two out of, say, four or five controls. They might do this when they are not aware of the rationale for such controls.

Secondly, those who want to commit fraud would learn the loopholes after studying the process over a period. These fraudsters could include those in charge of the controls. They might be leaving the door open for others to perpetrate fraud.

In a military establishment, if the distance between two watchtowers has been constant at 500 metres for over a century, it is easy for infiltrators to plan their strategy. When cantonment security never checks personnel in uniform, the infiltrators’ job is easy. When those who planned the September 11 attacks learnt that airport checks were lax for frequent fliers, the key players crisscrossed the world to become frequent fliers.

The decreasing and constant failure rates in the earlier stages will also contribute to complacency in the last phase.

Bathtub curve

The combined effect of the three curves is the bathtub curve, GH. The actual slope at various points in the curve would vary from situation to situation. The curve indicates that any process, after the initial phase, has a period of constant failure rates during its operational lifetime. After that, it reaches a stage of increasing failures calling for a replacement. Beyond this stage comes a terminal phase, when losses are likely to recur with greater frequency.
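The way three curves add up to a bathtub shape can be sketched numerically. The following is only an illustration, not a reconstruction of the figure: it assumes that the early-failure curve CD and the wear-out curve EF follow Weibull hazard rates (shape below 1 and above 1 respectively), that AB is a constant, and that time is measured in years. All function names and parameter values are hypothetical.

```python
def weibull_hazard(t, shape, scale):
    """Weibull hazard rate h(t) = (shape / scale) * (t / scale) ** (shape - 1).

    Valid for t > 0; a shape below 1 gives a falling rate, above 1 a rising rate.
    """
    return (shape / scale) * (t / scale) ** (shape - 1)


def bathtub_hazard(t, base_rate=0.02):
    """Sum of three assumed components, mirroring CD + AB + EF in the text."""
    early = weibull_hazard(t, shape=0.5, scale=50.0)    # CD: teething problems, decreasing
    wearout = weibull_hazard(t, shape=4.0, scale=30.0)  # EF: wear-out and complacency, increasing
    return early + base_rate + wearout                  # AB is the constant base_rate


if __name__ == "__main__":
    # Print the combined failure rate at a few illustrative points in time.
    for year in (0.5, 1, 5, 10, 15, 20, 25, 30):
        print(f"t={year:>4}: failure rate ~ {bathtub_hazard(year):.4f}")
```

With these assumed parameters, the combined rate starts high, flattens out in the middle years, and climbs again towards the end, which is the qualitative shape the text describes for GH.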

Saving the day

But, then, all is not lost. It is possible to postpone the day of reckoning. In engineering, periodical maintenance is the answer. Beyond a point, one has to go in for new machinery.

In finance, one can extend the life of the process as indicated by G’H’ and G”H”. But the effects could be short-lived. Periodical training, reiterating, and strengthening the controls are means to postpone the curve. Beyond a stage, the entire process will need to be changed. When I joined the Reserve Bank of India, its manual currency processing had long outlived its effectiveness. Certain checks and balances like note verification had ceased to serve any purpose. The Nayak Committee on Currency Management recommended mechanisation. But it took an outsider, Vepa Kamesam, as Deputy Governor to rethink and implement a modernised currency management system.
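The idea of postponing the curve can be illustrated with a simple "imperfect repair" sketch. It assumes, purely for illustration, that each periodic review or training round rolls back a fixed fraction of the time elapsed since the previous review, so that the process behaves as if it were younger than it is. The Weibull wear-out rate, the review interval, and the restoration factor are all hypothetical choices, not figures from the article.

```python
def wearout_rate(age, shape=4.0, scale=30.0):
    """Assumed increasing wear-out rate (Weibull hazard with shape > 1)."""
    return (shape / scale) * (age / scale) ** (shape - 1)


def effective_age(t, review_interval, restoration):
    """Age of the process after partial rejuvenation at each completed review.

    restoration = 0 means reviews change nothing; 1 would mean 'as good as new'.
    """
    reviews_done = int(t // review_interval)
    return t - restoration * reviews_done * review_interval


def rate_with_reviews(t, review_interval=5.0, restoration=0.4):
    """Wear-out rate at calendar time t when reviews partially reset the clock."""
    return wearout_rate(effective_age(t, review_interval, restoration))


if __name__ == "__main__":
    for year in (10, 20, 30, 40, 50):
        print(f"t={year}: without reviews {wearout_rate(year):.4f}, "
              f"with reviews {rate_with_reviews(year):.4f}")
```

Under these assumptions the reviewed process reaches at year 50 the failure rate that the unreviewed process reaches at year 30: the curve is shifted to the right, as with G’H’, but it still rises eventually, which is consistent with the point that beyond a stage the process itself has to change.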

Lessons from bathtub curve

What lessons does the bathtub curve model offer to prevent fraud and other operational risk events?

First, the absence of any major breach or failure over decades is no reason to avoid revisiting processes. For example, if currency note remittances from the press had no shortages earlier, that is no reason to assume that the next one will have none. One should periodically revisit processes to ensure they are robust and in keeping with changing times and varying threat perceptions.

Secondly, one needs to reexamine the effectiveness of existing controls, including guarding against the possibility of complacency weakening controls.

Thirdly, staff in charge of critical controls require periodical training. This cuts across levels. The training should sensitise them to the continuing relevance of controls. For example, one security guard prevented a large theft within days of training. He was applying one of the lessons learnt.

Fourth, every employee is responsible for the success of operational risk controls. It is not the sole responsibility of those in charge of critical controls.

Fifth, there is a need to reactivate the system of whistleblowers and make it more effective.

Sixth, we require cultural changes to ensure that compliance with rules and regulations is the norm. The system should look down upon deviations and instances of “paper compliance”. Wherever necessary, people should escalate deviations to appropriate levels for corrective measures.

© G Sreekumar 2022. Updated 6 May 2022.

